This blog aims to outline the Disaster Recovery Operational procedures for Oracle 19c Database with Multi-Tenant Architecture single PDB for EBS Database

Standby Database

A standby database is a transactionally consistent copy of the primary database. Using a backup copy of the primary database, you can create up to 30 standby databases and incorporate them in an Oracle Data Guard configuration.

There are three types of standby database:

- Physical standby — Provides a physically identical copy of the primary database, with on-disk database structures that are identical to the primary database on a block-for-block basis. The database schema, including indexes, is the same. A physical standby database is kept synchronized with the primary database by recovering the redo data received from the primary. This document details the steps for setting up a physical standby database.

- Logical standby — Contains the same logical information as the primary database, although the physical organization and structure of the data can be different. It is kept synchronized with the primary database by transforming the data in the redo logs received from the primary database into SQL statements and then executing the SQL statements on the standby database. Logical standby databases are not supported with Oracle E-Business Suite standard functionality.

- Snapshot standby — A fully updatable standby database. Like a physical or logical standby database, a snapshot standby database receives and archives redo data from a primary database. However, unlike a physical or logical standby, a snapshot standby does not apply the redo data it receives. This means it will be out of sync with the primary, so using a snapshot standby for disaster recovery risks recovery being incomplete.

Oracle Data Guard

Oracle Data Guard is a set of services that create, manage, and monitor one or more standby databases to enable a primary database to survive disasters and data corruption. If the primary database becomes unavailable due to a planned or an unplanned outage, Oracle Data Guard can switch a standby database to the primary role, minimizing the downtime.

Oracle Data Guard offers three modes of data protection:

- Maximum Protection — This mode offers the highest level of data protection and guarantees no data will be lost. Data is synchronously transmitted from the primary database to one or more standby databases, and transactions are not committed on the primary database unless the redo data is available on at least one standby database configured in this mode. If the last standby database configured in this mode becomes unavailable, processing stops on the primary database.

- Maximum Availability — This mode is similar to the maximum protection mode, including a guarantee of no data loss. However, if a standby database becomes unavailable (for example, due to network connectivity problems), processing continues on the primary database. After the fault is corrected, the standby database is resynchronized with the primary database. However, if there is a need to fail over before the standby database is resynchronized, some data may be lost.

Maximum Performance — This mode offers slightly less data protection on the primary database, but higher performance than maximum availability mode. In maximum performance mode, as the primary database processes transactions the redo data is asynchronously shipped to the standby database. The commit operation on the primary database does not wait for the standby database to acknowledge receipt of redo data before completing write operations on the primary database. If any standby destination becomes unavailable, processing continues on the primary database, and there is little effect on primary database performance.

Oracle Data Guard Broker

The Oracle Data Guard broker is a distributed management framework that automates and centralizes the creation, maintenance, and monitoring of Data Guard configurations. It logically groups the primary and standby databases into a broker configuration that allows the broker to manage and monitor them together as an integrated unit.

The Data Guard broker consists of the following three components:

- Data Guard graphical user interface (GUI) through Enterprise Manager

- Data Guard command-line interface (DGMGRL)

- Data Guard monitor

The broker automates and simplifies the following operations:

- Standby database creation — The Oracle Enterprise Manager wizards automate and simplify the steps required to create a configuration with an Oracle database on each site, including creating the standby control file, online redo log files, data files, and server parameter files.

Note: This document contains specific steps to set up a physical standby with Oracle E-Business Suite for both Oracle RAC and single-node databases.

- Role transitions — This simplifies the switchover and failover process, including automatically setting up redo transport and log apply services, and automating failover.

Note: Fast-start failover is currently not supported with Oracle E-Business Suite.

- Monitoring — This provides continuous monitoring of the configuration health, database health, and other runtime parameters.

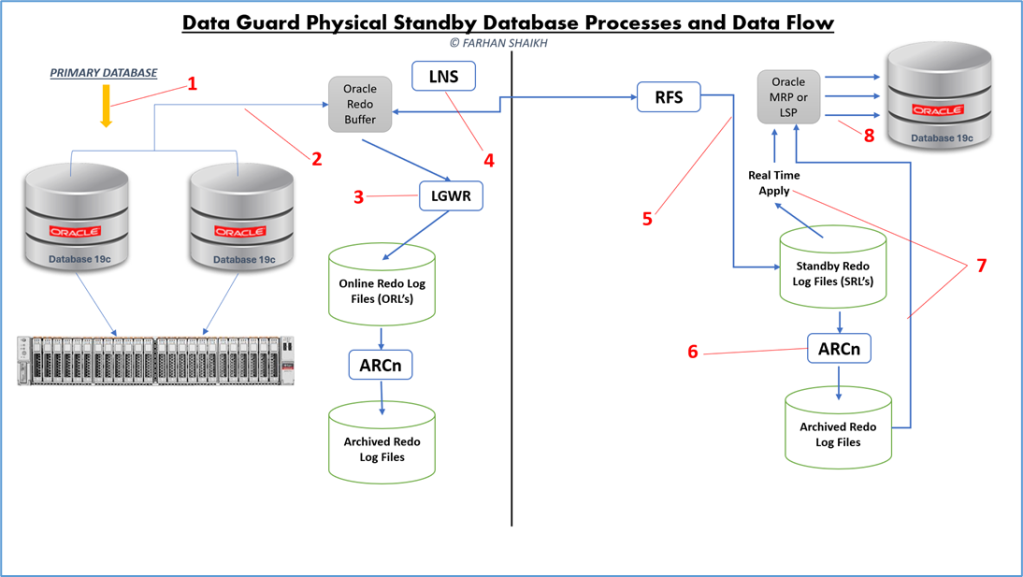

Typical Oracle Data Guard Flow

1) On Primary : Transactions start on Primary and all the buffer cache locks (exclusive locks) that are required for the transaction are acquired.

2) On Primary : The redo blocks that hold the changes (or change vectors) are generated and stored in the processes PGA. After successfully acquiring the redo allocation latch, space is then allocated in the redo log buffer. The redo generated then gets copied from the processes PGA into the redo log buffer.

3) On Primary : Oracle foreground process tells the LGWR to flush the redo log buffers to disk. Remember that the database blocks in the database have not yet been updated with DML changes. The LGWR flushes the redo buffers to the ORL and acknowledges the completion to the session. At this point, the transaction is persistent on disk. No commit has occurred so far.

4) On Primary : the LNS process reads the recently flushed redo from the redo log buffer and sends the redo data to the standby database using the redo transport destination (LOG_ARCHIVE_DEST_n) that we defined during standby database creation. We are using ASYNC transport method, so the LGWR does not wait for any acknowledgment from the LNS for this network send operation. It does not communicate with the LNS except to start it up at the database start stage and after a failure of a standby connection.

5) On Standby : The RFS reads the redo stream from the network socket into the network buffers, and then it writes this redo stream to the SRL.

6) On Standby : The ARCH process archives the SRLs into archive log files when a log switch occurs at the primary database. The generated archive log file is then registered with the standby control file.

7) On Standby : The actual recovery process starts from this step. The managed recovery process (MRP) will asynchronously read ahead the redo from the SRLs or the archived redo logs (when recovery falls behind or is not in real-time apply mode). The blocks that require redo apply are parsed out and placed into appropriate in-memory map segments.

8) On Standby: the MRP process ships redo to the recovery slaves using the parallel query (PQ) inter-process communication framework. Parallel media recovery (PMR) causes the required data blocks to be read into the buffer cache, and subsequently redo will be applied to these buffer cache buffers. At the checkpoint phase, the recently modified buffers (modified by the parallel recovery slaves) will be flushed to disk and update of datafile headers to record checkpoint completion.

Background Processes On the Primary Database:

LGWR : The log writer process flushes log buffers from the SGA to Online Redo Log files.

LNS : The Log Writer Network Service (LNS) reads the redo being flushed from the redo buffers by the LGWR and sends the redo over network to the standby database. The main purpose of the LNS process is to free up the LGWR process from performing the redo transport role.

ARCH : The archiver processes archives the ORL files to archive log files. Up to 30 ARCH processes can exist, and these ARCH processes are also used to fulfill gap resolution requests. Note that one ARCH process has a special role in that it is dedicated to local redo log archiving only and never communicates with a standby database.

Background Processes On the Standby Database:

RFS : The main objective of the Remote File Server process is to perform a network receive of redo transmitted from the primary site and then writes the network buffer (redo data) to the standby redo log (SRL) files.

ARCH : The archive processes on the standby site perform the same functions performed on the primary site, except that on the standby site, an ARCH process generates archived log files from the SRLs.

MRP : The managed recovery process coordinates media recovery management. Remember that a physical standby is in perpetual recovery mode.

Basically, we can categorize physical standby database into three major components:

1) Data Guard Redo Transport Services

– To transfer the redo that is generated by the primary database to the standby database. (Point 4 and 5 in the above diagram are where Redo Transport works.)

2) Data Guard Apply Services

– To receive and apply the redo sent by Redo Transport Services to the standby database. (Point 7 and 8 in the above diagram are where Redo Apply works.)

3) Data Guard Role Management Services

– To assist in the database role changes in switchover and failover scenarios.

This service works in the background and takes care of switchover/failover scenarios.

ORACLE STANDBY BUILD PROCEDURE

| Task | Command Reference |

| Backup PRIMARY pfile and copy it to Standby server | PRODUCTION:oraprod@PRIM_DB_N1:scp -rp * oraprod@SBY_DB_N1:/Stage/PRDSBY Authorized users only. All activity may be monitored and reported. Password: pfile_PRIM_DB_N1_cdb_21082023.ora 100% 4550 550.7KB/s 00:00 pfile_PRIM_DB_N1_pdb_21082023.ora 100% 957 127.9KB/s 00:00 PRODUCTION:oraprod@PRIM_DB_N1: |

| Enable Forced Logging on PRIMARY | Set the environment to the Container Database: $ source <RDBMS_ORACLE_HOME>/<cdb_name>.env Use the following SQL command to set FORCE LOGGING on the primary database: SQL> ALTER DATABASE FORCE LOGGING; |

| Configure Oracle Net Communication to and From the Standby System on PRIMARY | Standby LISTENER.ORA file when server is running as standby # listener.ora Network Configuration File: /u01/app/oracle/product/19.0.0/network/admin/listener.ora # Generated by Oracle configuration tools. # # $Header: listener_ora_cdb_db19_rac.tmp 120.0.12020000.1 2019/03/25 07:46:16 lmanda noship $ # # +===========================================================================+ # | Copyright (c) 2016, 2019 Oracle Corporation, Redwood Shores, California, USA | # | All rights reserved | # | Applications Division | # +===========================================================================+ # # $AutoConfig$ # #################################### # Definition for database listener #################################### CDBPRD = (DESCRIPTION_LIST = (DESCRIPTION = (ADDRESS = (PROTOCOL = TCP)(HOST = PRIM_DB_N1-vip.corp.prod.com)(PORT = 1529)) (ADDRESS = (PROTOCOL = IPC)(KEY = EXTPROC1529)) ) ) TRACE_DIRECTORY_CDBPRD = /u01/app/oracle/product/19.0.0/network/admin STARTUP_WAIT_TIME_CDBPRD = 0 TRACE_FILE_CDBPRD = CDBPRD TRACE_LEVEL_CDBPRD = OFF LOG_FILE_CDBPRD = CDBPRD ADMIN_RESTRICTIONS_CDBPRD = ON SUBSCRIBE_FOR_NODE_DOWN_EVENT_CDBPRD = OFF USE_SID_AS_SERVICE_CDBPRD = ON LOG_DIRECTORY_CDBPRD = /u01/app/oracle/product/19.0.0/network/admin CONNECT_TIMEOUT_CDBPRD = 10 |

| TNS Aliases | For single-node, the standby and primary alias must have the physical hostname or VIP name. Configuration of TNS Aliases for Oracle RAC Database The TNS alias requirements are different for Oracle RAC. If you are not using SCAN, then all the physical or VIP host name should be used for the primary alias CDBPROD and standby alias, which is CDBSTDBY in this example. When the production and standby are connected using SCAN Listeners, the TNS alias must use the actual DNS-registered SCAN name at their respective sites. These must be specified when configuring TNS aliases for redo-transmission between primary and standby databases. Create <CDBNAME>_<NODE>_ifile.ora under $ORACLE_HOME/network/admin directory and add TNS aliases as example shown in the following code block. Also add <SID>_LOCAL alias with physical host names to this IFILE. CDBPRDSBY=(DESCRIPTION= (ADDRESS_LIST= (ADDRESS=(PROTOCOL=tcp)(HOST=SBY_DB_N1.corp.prod.com)(PORT=1529))) (CONNECT_DATA= (SERVICE_NAME=CDBPRD) (UR=A) ) ) |

| Configure Secure Connections on PRIMARY | $ cd $ORACLE_HOME/dbs $ orapwd file=$ORACLE_HOME/dbs/orapwCDBPRD password=M4n4g3r# entries=10 force=yes ignorecase=y PRODUCTION:oraprod@PRIM_DB_N1:scp -rp orapwCDBPRD oraprod@SBY_DB_N1:/u01/app/oracle/product/19.0.0/dbs Authorized users only. All activity may be monitored and reported. Password: orapwCDBPRD 100% 6144 1.5MB/s 00:00 PRODUCTION:oraprod@PRIM_DB_N1 |

| Set the PRIMARY Database Initialization Parameters | log_archive_dest_1 log_archive_dest_2 log_archive_dest_state_2 log_archive_format log_archive_min_succeed_dest log_archive_config db_unique_name fal_server fal_client standby_file_management db_file_name_convert, log_file_name_convert Remote_login_passwordfile set linesize 500 pages 500 col value for a90 col name for a50 select name, value from v$parameter where name in (‘db_name’,’db_unique_name’,’log_archive_config’, ‘log_archive_dest_1′,’log_archive_dest_2’, ‘log_archive_dest_state_1′,’log_archive_dest_state_2’, ‘remote_login_passwordfile’, ‘log_archive_format’,’log_archive_max_processes’,’fal_server’,’db_file_name_convert’, ‘log_file_name_convert’, ‘standby_file_management’,’fal_server’,’fal_client’); log_archive_dest_3=’SERVICE=PRDSBY NOAFFIRM ASYNC VALID_FOR=(ONLINE_LOGFILES,PRIMARY_ROLE) DB_UNIQUE_NAME=PRDSBY’ The following example shows the relevant initialization parameters of the primary database: db_unique_name = CDBPROD — You need to change this to the standby db_unique_name (such as dg19s) when you copy this file to the physical standby. log_archive_dest_1 =”LOCATION=/arch1/CDBPROD/ MANDATORY” log_archive_dest_2 =”SERVICE=CDBSTDBY LGWR ASYNC=20480 DB_UNIQUE_NAME=CDBSTDBY OPTIONAL REOPEN=15 MAX_FAILURE=10 NET_TIMEOUT=30″ log_archive_config=’dg_config=(CDBPROD,CDBSTDBY)’ log_archive_min_succeed_dest = 1 standby_file_management = AUTO Remote_login_passwordfile = exclusive log_archive_format = <name>%s_%t_%r. <ext> —- Or you can just leave it set to the default. # db_file_name_convert: —- you do not need to specify this if you use the same directory structure # log_file_name_convert: —- you do not need to specify this if you use the same directory structure fal_server = CDBPROD log_file_name_convert = xx,xx —- Specify dummy values to trigger log clearing |

| Enable Archive Logging on the PRIMARY System | Already enabled on PRIMARY database |

| Add Standby Redo Logs (SRL’s) on PRIMARY Database | Redo data transmitted from the primary database is received by the remote file server (RFS) process on the standby system. The RFS process writes the redo data to archived log files or standby redo log files. Redo data can be applied either after the redo is written to the archived redo log file or standby redo log file, or, if real-time apply is enabled, directly from the standby redo log file as it is being filled. Standby redo logs are required if you want to use, for example, maximum protection and maximum availability levels of data protection, or real-time apply. A best practice is to add them to both the primary and the standby database so that switchover between the environments is quicker and easier. In this case, you will add them to production, so that they are in place and will be cloned to the standby. There are two considerations: On the primary — The standard Oracle recommendation is to multiplex redo logs on the primary when using normal redundancy, but not when using ASM with high redundancy. On the standby — Standby redo log files can be multiplexed using multiple members, thereby improving reliability over archived log files. However, multiplexing redo logs adds more I/O overhead that could impact the standby’s redo apply rate, given that multiplexing generates N*3 writes write I/Os (where N is the number of multiplex redo logs). This is why some references state “Do not multiplex the standby redo logs”. Given that there are several factors to consider, you should test in advance whichever solution you decide to implement. As a general rule, follow the same best practice as for the primary for online redo logs: Create standby redo log files with the same sizes, groups and number of members as on the primary. The standby redo log must have at least one more redo log group than the redo log at the redo source database, for each redo thread at the redo source database. That is to say: number of standby redo logs = the number of redo log groups in the primar + 1. SQL> select b.thread#, a.group#, a.member, b.bytes/1024/1024 FROM v$logfile a, v$log b WHERE a.group# = b.group#; PRODUCTION:oraprod@PRIM_DB_N1: sqlplus / as sysdba SQL*Plus: Release 18.0.0.0.0 – Production on Tue Aug 22 14:40:30 2023 Version 18.3.0.0.0 Copyright (c) 1982, 2018, Oracle. All rights reserved. Connected to: Oracle Database 19c Enterprise Edition Release 19.0.0.0.0 – Production Version 19.16.0.0.0 SQL> show user USER is “SYS” SQL> !hostname PRIM_DB_N1 SQL> !date Tuesday, August 22, 2023 at 2:40:41 PM +04 SQL> alter database add standby logfile group 5 (‘+REDO’,’+RECO’,’+DATA’) size 300M; Database altered. SQL> alter database add standby logfile group 6 (‘+REDO’,’+RECO’,’+DATA’) size 300M; Database altered. SQL> alter database add standby logfile group 7 (‘+REDO’,’+RECO’,’+DATA’) size 300M; Database altered. |

| Gather Temporary File Informationfrom thePRIMARY | SQL> select file_name, bytes from dba_temp_files; SQL> alter session set container=”PDB$SEED”; SQL> select file_name, bytes from dba_temp_files; SQL> alter session set container=”<EBS PDB name>”; SQL> select file_name, bytes from dba_temp_files; |

| Run Database Tier Pre-Clone Scripts on PRIMARY | As the oracle user, run the database pre-clone utility on the primary database server. $ cd $RDBMS_ORACLE_HOME/appsutil/scripts/<context_name> $ perl adpreclone.pl dbTier |

| Copy the ORACLE_HOME and Database from PRIMARY to the Standby Database Server | |

| Generate a Standby Control File on PRIMARY and Copy it to the Standby Database Server (Conditional) | SQL> alter database create standby controlfile as <directory>/<controlfile name>; SQL> alter system switch logfile; SQL> select thread#, sequence#-1 from v$log where status = ‘CURRENT’; Now copy the control file to the standby database server, noting the thread# and sequence# for later use. You will only be able to open the standby database after this log has been applied on the standby. SQL> select thread#, sequence#-1 from v$log where status = ‘CURRENT’; THREAD# SEQUENCE#-1 ———- ———– 1 9906 2 9894 SQL> alter database create standby controlfile as ‘/u01/oradata/Stage/PRD_BKP/SBY/CDBPRD_SBY_controlfile_23AUG23.ctl’; Database altered. SQL> alter system switch logfile; System altered. SQL> select thread#, sequence#-1 from v$log where status = ‘CURRENT’; THREAD# SEQUENCE#-1 ———- ———– 1 9907 2 9894 SQL> Disconnected from Oracle Database 19c Enterprise Edition Release 19.0.0.0.0 – Production Version 19.16.0.0.0 PRODUCTION:oraprod@PRIM_DB_N1:ls -lrth /Stage/BKP/SBY/CDBPRD_SBY_controlfile_23AUG23.ctl -rw-r—– 1 oraprod oinstall 35.1M Aug 23 11:56 /Stage/BKP/SBY/CDBPRD_SBY_controlfile_23AUG23.ctl PRODUCTION:oraprod@PRIM_DB_N1:scp -rp /Stage/BKP/SBY/CDBPRD_SBY_controlfile_23AUG23.ctl oraprod@SBY_DB_N1:/Stage/PRDSBY Authorized users only. All activity may be monitored and reported. Password: CDBPRD_SBY_controlfile_23AUG23.ctl 100% 35MB 18.9MB/s 00:01 PRODUCTION:oraprod@PRIM_DB_N1: Standby Server: PRODUCTION-DR:oraprod@SBY_DB_N1:cd $BKP PRODUCTION-DR:oraprod@SBY_DB_N1:ls -lrth total 120980 -rw-r–r– 1 oraprod oinstall 4.44K Aug 21 20:32 pfile_PRIM_DB_N1_cdb_21082023.ora -rw-r–r– 1 oraprod oinstall 957 Aug 21 20:32 pfile_PRIM_DB_N1_pdb_21082023.ora -rw-r–r– 1 oraprod oinstall 3.48K Aug 22 14:00 initPRDSBY.ora -rw-r—– 1 oraprod oinstall 23.8M Aug 22 15:18 CDBPRD_SBY_controlfile_22AUG23.ctl -rw-r–r– 1 oraprod oinstall 3.63K Aug 22 23:04 initCDBPRDSBY.ora_FS -rw-r–r– 1 oraprod oinstall 3.05K Aug 23 09:58 initCDBPRDSBY.ora_23Aug23 -rw-r–r– 1 oraprod oinstall 3.27K Aug 23 11:23 initCDBPRDSBY.ora -rw-r—– 1 oraprod oinstall 35.1M Aug 23 11:56 CDBPRD_SBY_controlfile_23AUG23.ctl PRODUCTION-DR:oraprod@SBY_DB_N1:pwd /Stage/BKP PRODUCTION-DR:oraprod@SBY_DB_N1:ls -lrth CDBPRD_SBY_controlfile_23AUG23.ctl -rw-r—– 1 oraprod oinstall 35.1M Aug 23 11:56 CDBPRD_SBY_controlfile_23AUG23.ctl PRODUCTION-DR:oraprod@SBY_DB_N1: |

| Configuration of the STANDBY Oracle Home for a Single-Node | $ cd $ORACLE_HOME/appsutil/clone/bin $ perl adcfgclone.pl dbTechStack Enter values, as appropriate for the following prompts: Do you want the inputs to be validated (y/n) [n] ? :n Target Instance is RAC (y/n) [y] :y Target System CDB Name : Target System PDB Name : Do you want to enable SCAN addresses (y/n) [y] ? :y Specify value for Scan Name : Specify value for Scan Port : Target System Base Directory : Target System Port Pool [0-99] : Provide information for the initial RAC node: Host name [] : Virtual Host name [null] : Instance number [1] : Private interconnect name [] : Oracle OS User [oracle] : Oracle OS Group [oinstall] : Target System utl_file_dir Directory List : Number of DATA_TOP’s on the Target System [3] : Target System DATA_TOP Directory 1 [] : Target System DATA_TOP Directory 2 [] : Target System DATA_TOP Directory 3 [] : Target System RDBMS ORACLE_HOME Directory [] : Do you want to preserve the Display [localhost:0.0] (y/n) : n Target System Display [dbsystem:0.0] : |

| Stop the Database Listener on the STANDBY Database Server | $ lsnrctl stop <EBSCDB listener name> PRODUCTION-DR:oraprod@SBY_DB_N1:lsnrctl stop CDBPRD LSNRCTL for Solaris: Version 19.0.0.0.0 – Production on 22-AUG-2023 18:16:41 Copyright (c) 1991, 2022, Oracle. All rights reserved. Connecting to (DESCRIPTION=(ADDRESS=(PROTOCOL=TCP)(HOST=SBY_DB_N1.corp.prod.com)(PORT=1529))) The command completed successfully PRODUCTION-DR:oraprod@SBY_DB_N1: |

| Disable RAC option on STANDBY if building Standby from Primary as RAC to Standby as Single Node Non-RAC | 1. Login as the Oracle software owner and shutdown all database instances (if running). 2. cd $ORACLE_HOME/rdbms/lib 3. make -f ins_rdbms.mk rac_off If this step did not fail with fatal errors then proceed to step 4. 4. make -f ins_rdbms.mk ioracle |

| Configure Oracle Net for Redo Transmission and Start the ListeneronSTANDBY | CDBPROD = (DESCRIPTION_LIST = (DESCRIPTION = (ADDRESS_LIST = (ADDRESS = (PROTOCOL = TCP)(HOST = <standby hostname>)(PORT = <PORT>)(IP = FIRST))) (ADDRESS_LIST = (ADDRESS = (PROTOCOL = TCP)(HOST = <standby Physical-VIP hostname>)(PORT = <PORT>)(IP = FIRST))) ) ) SID_LIST_CDBPROD = (SID_LIST = (SID_DESC = (ORACLE_HOME = [ORACLE_HOME])(GLOBAL_NAME=<standby db_unique_name>)(SID_NAME = <instance name>)) ) USE_SID_AS_SERVICE_CDBPROD = ON STARTUP_WAIT_TIME_CDBPROD = 0 CONNECT_TIMEOUT_CDBPROD = 10 TRACE_LEVEL_CDBPROD = OFF LOG_DIRECTORY_CDBPROD = [ORACLE_HOME]/network/admin LOG_FILE_CDBPROD = STDBY TRACE_DIRECTORY_CDBPROD = [ORACLE_HOME]/network/admin TRACE_FILE_CDBPROD = STDBY ADMIN_RESTRICTIONS_CDBPROD = ON SUBSCRIBE_FOR_NODE_DOWN_EVENT_CDBPROD = OFF Next start the database listener on the standby: $ lsnrctl start <standby listener> |

| Run root.sh on 19c OHofSTANDBY | $ cd $ORACLE_HOME $ ls -l root.sh # sh root.sh — needs to be executed as ROOT user |

| Start the STANDBY Database in nomount | PRODUCTION-DR:oraprod@SBY_DB_N1: sqlplus / as sysdba SQL*Plus: Release 19.0.0.0.0 – Production on Wed Aug 23 12:20:43 2023 Version 19.16.0.0.0 Copyright (c) 1982, 2022, Oracle. All rights reserved. Connected to an idle instance. SQL> startup nomount pfile=’/Stage/BKP/initCDBPRDSBY.ora ORACLE instance started. Total System Global Area 5.3687E+10 bytes Fixed Size 37438264 bytes Variable Size 6308233216 bytes Database Buffers 4.7245E+10 bytes Redo Buffers 96772096 bytes SQL> Disconnected from Oracle Database 19c Enterprise Edition Release Version 19.16.0.0.0 |

| Restore STANDBY Control file | PRODUCTION-DR:oraprod@SBY_DB_N1:rman target / Recovery Manager: Release 19.0.0.0.0 – Production on Wed Aug 23 12:21 Version 19.16.0.0.0 Copyright (c) 1982, 2019, Oracle and/or its affiliates. All rights r connected to target database: CDBPRD (not mounted) RMAN> RESTORE STANDBY CONTROLFILE FROM ‘/Stage/BKP/CDBPRD_SBY_controlfile_23AUG23.ctl’; Starting restore at 23-AUG-23 using target database control file instead of recovery catalog allocated channel: ORA_DISK_1 channel ORA_DISK_1: SID=1 device type=DISK channel ORA_DISK_1: copied control file copy output file name=/u01/PRD/oradata/control01.dbf output file name=/u02/PRD/oradata/control02.dbf output file name=/u03/PRD/oradata/control03.dbf Finished restore at 23-AUG-23 RMAN> ALTER DATABASE MOUNT; released channel: ORA_DISK_1 Statement processed RMAN> select name,open_mode from v$database; NAME OPEN_MODE ——— ——————– CDBPRD MOUNTED RMAN> exit Recovery Manager complete. PRODUCTION-DR:oraprod@SBY_DB_N1: SQL> show pdbs; CON_ID CON_NAME OPEN MODE RESTRICTED ———- —————————— ———- ———- 2 PDB$SEED MOUNTED 3 PRD MOUNTED SQL> select name,open_mode from v$database; NAME OPEN_MODE ——— ——————– CDBPRD MOUNTED SQL> !date Wednesday, August 23, 2023 at 12:24:49 PM +04 SQL> |

| Modify the Database init.ora Parameters on the STANDBY Server | SQL> alter system set <parameter>=<value>; As the ORACLE user on the standby database server, create an ifile for the standby database based on the one created earlier for the primary database: $ cd <ORACLE_HOME>/dbs $ cp <CONTEXT_NAME>_ifile.ora <STNDBY_CONTEXT>_ifile.ora Update the following parameters: DB_UNIQUE_NAME should be updated to a name different from the primary: for example, CDBSTDBY LOG_ARCHIVE_DEST_2 should point to the primary service. This is necessary when the servers are reversed, as the standby is changed to act as the primary and will now ship redo to the new standby. For example, LOG_ARCHIVE_DEST_2 for ‘service=CDBPROD ASYNC REGISTER VALID_FOR=(online_logfile,primary_role) DB_UNIQUE_NAME=CDBPROD’. Add an entry to the standby control file that was created on the primary and now copied to this server :control_files = <control file directory>/<standby control file>, <control file directory>/<standby control file> set linesize 500 pages 500 col value for a90 col name for a50 select name, value from v$parameter where name in (‘db_name’,’db_unique_name’,’log_archive_config’, ‘log_archive_dest_1′,’log_archive_dest_2’, ‘log_archive_dest_state_1′,’log_archive_dest_state_2’, ‘remote_login_passwordfile’, ‘log_archive_format’,’log_archive_max_processes’,’fal_server’,’db_file_name_convert’, ‘log_file_name_convert’, ‘standby_file_management’,’fal_server’,’fal_client’); |

| Run restore and recoveryfor STANDBY Database | SQL> alter database mount; ISSUE / FIX ————— RMAN-00571: =========================================================== RMAN-03002: failure of sql statement command at 08/23/2023 12:13:54 ORA-65093: multitenant container database not set up properly RMAN> exit Recovery Manager complete. SQL> alter system set enable_pluggable_database=true scope=spfile; cleanup restored control file and re-invoke restore and try mounting the database and then invoke restore. rman target / MSGLOG=db_restore_for_dr_buid.log <<EOF run { set newname FOR DATABASE TO ‘/u01/PRD/oradata/%b’; restore database; switch datafile all; } EOF exit Restore Archive and Recover —————————————- rman target / MSGLOG=restore_arch_for_dr_buid.log <<EOF run { recover database; } EOF exit |

| As the oracle user on the standby database server, perform the following after the standby database creation has completed: Set the the CDB environment and mount the standby database. $ . <ORACLE HOME>/<CDB INSTANCE>_<physical hostname1>.env Put the standby database into managed recovery mode on the first node in the cluster. $ sqlplus / as sysdba SQL> alter database recover managed standby database disconnect from session; | |

| Start Shipping Redo from the Primary to the PRIMARY Database Server | $ . <ORACLE HOME>/<CDB INSTANCE>_<physical hostname1>.env $ sqlplus / as sysdba On Primary : alter system set log_archive_dest_3=’SERVICE=PRDSBY LGWR ASYNC VALID_FOR=(ONLINE_LOGFILES,PRIMARY_ROLE) DB_UNIQUE_NAME=PRDSBY’ scope=both sid=’*’; |

| Verify that Redo is Shipping from PRIMARY | SQL> alter system switch logfile; While still on the primary, check the status of the most recently archived redo log file at each of the redo transport destinations. The most recently archived redo log file should be the same at each destination. If it is not, a status other than VALID may identify an error was encountered during the archival operation to that destination. Resolve any errors before continuing. SQL> select * from v$archive_dest_status where status != ‘INACTIVE’; On each database server, the following query will show which logs have been sent/received and applied: SQL > select sequence#, applied, to_char(first_time, ‘mm/dd/yy hh24:mi:ss’) first from v$archived_log order by first_time; On the standby database server, monitor the database alert log to review the recovery progress. |

| Add the Temp Files to the STANDBY Database (Conditional) – This requires ADG license option | Execute the following commands: SQL> alter database recover managed standby database cancel; SQL> alter database open read only; SQL> alter tablespace temp add tempfile ‘ <file spec>’ size <size> reuse; [enter as many lines as you have temporary data files] SQL> shutdown immediate; SQL> startup mount; SQL> alter database recover managed standby database disconnect from session; |

Important Commands for Standby

| Basic information of database (primary or standby) | SELECT DATABASE_ROLE, DB_UNIQUE_NAME INSTANCE, OPEN_MODE, PROTECTION_MODE, PROTECTION_LEVEL, SWITCHOVER_STATUS FROM V$DATABASE; select status,instance_name,database_role,protection_mode from v$database,v$instance; |

| Check for messages/errors | SELECT MESSAGE FROM V$DATAGUARD_STATUS; |

| To display current status information for specific physical standby database background processes. | SELECT PROCESS, STATUS, THREAD#, SEQUENCE#, BLOCK#, BLOCKS FROM V$MANAGED_STANDBY ; |

| Show received archived logs on physical standby (Run this query on physical standby) | select registrar, creator, thread#, sequence#, first_change#, next_change# from v$archived_log; |

| To check the log status | select ‘Last Log applied : ‘ Logs, to_char(next_time,’DD-MON-YY:HH24:MI:SS’) Time from v$archived_log where sequence# = (select max(sequence#) from v$archived_log where applied=’YES’) union select ‘Last Log received : ‘ Logs, to_char(next_time,’DD-MON-YY:HH24:MI:SS’) Time from v$archived_log where sequence# = (select max(sequence#) from v$archived_log); |

| To display various information about the redo data. This includes redo data generated by the primary database that is not yet available on the standby database and how much redo has not yet been applied to the standby database. | set lines 132 col value format a20 select name, value from V$DATAGUARD_STATS; |

| To monitor efficient recovery operations as well as to estimate the time required to complete the current operation in progress: | select to_char(start_time, ‘DD-MON-RR HH24:MI:SS’) start_time,item, round(sofar/1024,2) “MB/Sec” from v$recovery_progress where (item=’Active Apply Rate’ or item=’Average Apply Rate’); |

| To find last applied log | select to_char(max(FIRST_TIME),‘hh24:mi:ss dd/mm/yyyy’) FROM V$ARCHIVED_LOG where applied=’YES’; |

| To see if standby redo logs have been created. The standby redo logs should be the same size as the online redo logs. There should be (( # of online logs per thread + 1) * # of threads) standby redo logs. A value of 0 for the thread# means the log has never been allocated. | SELECT thread#, group#, sequence#, bytes, archived, status FROM v$standby_log order by thread#, group#; |

| To produce a list of defined archive destinations. It shows if they are enabled, what process is servicing that destination, if the destination is local or remote, and if remote what the current mount ID is. For a physical standby we should have at least one remote destination that points the primary set. | column destination format a35 wrap column process format a7 column ID format 99 column mid format 99 SELECT thread#, dest_id, destination, gvad.status, target, schedule, process, mountid mid FROM gv$archive_dest gvad, gv$instance gvi WHERE gvad.inst_id = gvi.inst_id AND destination is NOT NULL ORDER BY thread#, dest_id; |

| Verify the last sequence# received and the last sequence# applied to standby database. | SELECT al.thrd “Thread”, almax “Last Seq Received”, lhmax “Last Seq Applied” FROM (select thread# thrd, MAX(sequence#) almax FROM v$archived_log WHERE resetlogs_change#=(SELECT resetlogs_change# FROM v$database) GROUP BY thread#) al, (SELECT thread# thrd, MAX(sequence#) lhmax FROM v$log_history WHERE resetlogs_change#=(SELECT resetlogs_change# FROM v$database) GROUP BY thread#) lh WHERE al.thrd = lh.thrd; SELECT al.thrd “Thread”, almax “Last Seq Received”, lhmax “Last Seq Applied” FROM (select thread# thrd, MAX(sequence#) almax FROM v$archived_log WHERE resetlogs_change#=(SELECT resetlogs_change# FROM v$database) GROUP BY thread#) al, (SELECT thread# thrd, MAX(sequence#) lhmax FROM v$log_history WHERE resetlogs_change#=(SELECT resetlogs_change# FROM v$database) GROUP BY thread#) lh WHERE al.thrd = lh.thrd; |

| Query to apply redo log on standby. Following command useful for real time apply | ALTER DATABASE RECOVER MANAGED STANDBY DATABASE USING CURRENT LOGFILE; |

| To Start the MRP process using archive log | alter database recover managed standby database disconnect from session; |

| Cancel the MRP Process | alter database recover managed standby database cancel; |

| Status of MRP Process | select process,status,client_process,thread#,sequence#,block#,blocks,delay_mins from v$managed_standby; |

| To get the difference between Received and applied logs. | select MAX_RECEIVED,MAX_APPLIED,(MAX_RECEIVED – MAX_APPLIED) as “Difference” from (SELECT ARCHIVED_THREAD#, MAX(ARCHIVED_SEQ#) as MAX_RECEIVED, APPLIED_THREAD#, MAX(APPLIED_SEQ#) as MAX_APPLIED FROM V$ARCHIVE_DEST_STATUS) ARCH ; |

| Check the difference in sequence | SELECT ARCH.THREAD# “Thread”, ARCH.SEQUENCE# “Last Sequence Received”, APPL.SEQUENCE# “Last Sequence Applied”, (ARCH.SEQUENCE# – APPL.SEQUENCE#) “Difference” FROM (SELECT THREAD# ,SEQUENCE# FROM V$ARCHIVED_LOG WHERE (THREAD#,FIRST_TIME ) IN (SELECT THREAD#,MAX(FIRST_TIME) FROM V$ARCHIVED_LOG GROUP BY THREAD#)) ARCH, (SELECT THREAD# ,SEQUENCE# FROM V$LOG_HISTORY WHERE (THREAD#,FIRST_TIME ) IN (SELECT THREAD#,MAX(FIRST_TIME) FROM V$LOG_HISTORY GROUP BY THREAD#)) APPL WHERE ARCH.THREAD# = APPL.THREAD# ORDER BY 1; |

References

| https://docs.oracle.com/en/database/oracle/oracle-database/19/sbydb/creating-oracle-data-guard-physical-standby.html#GUID-B511FB6E-E3E7-436D-94B5-071C37550170 – Creating a Physical Standby Database https://docs.oracle.com/en/database/oracle/oracle-database/19/sbydb/index.html#Oracle%C2%AE-Data-Guard – Concepts and Administration https://docs.oracle.com/en/database/oracle/oracle-database/19/sbydb/sql-statements-used-by-oracle-data-guard.html#GUID-F690B602-6582-465B-8E86-0B0A52838D66 – SQL Statements Relevant to Oracle Data Guard https://docs.oracle.com/en/database/oracle/oracle-database/19/dgbkr/index.html#Oracle%C2%AE-Data-Guard – DGB Business Continuity for Oracle E-Business Suite Release 12.2 on Oracle Database 19c Using Physical Host Names (Doc ID 2617787.1) Using Active Data Guard Reporting with Oracle E-Business Suite Release 12.2 and Oracle Database 19c (Doc ID 2608030.1) Creating a Physical Standby database using RMAN restore database from service (Doc ID 2283978.1) 12c Create Dataguard Broker Configuration – DGMGRL (Doc ID 1583588.1) http://www.br8dba.com/dg-broker-configuration/ – How to setup Data Guard Broker Configuration |

Feel free to share feedback on farhan20@gmail.com